sequence

16 Oct, 2023

One really interesting behavior I came across while tinkering with GPT-3.5+ models is their ability to call functions.

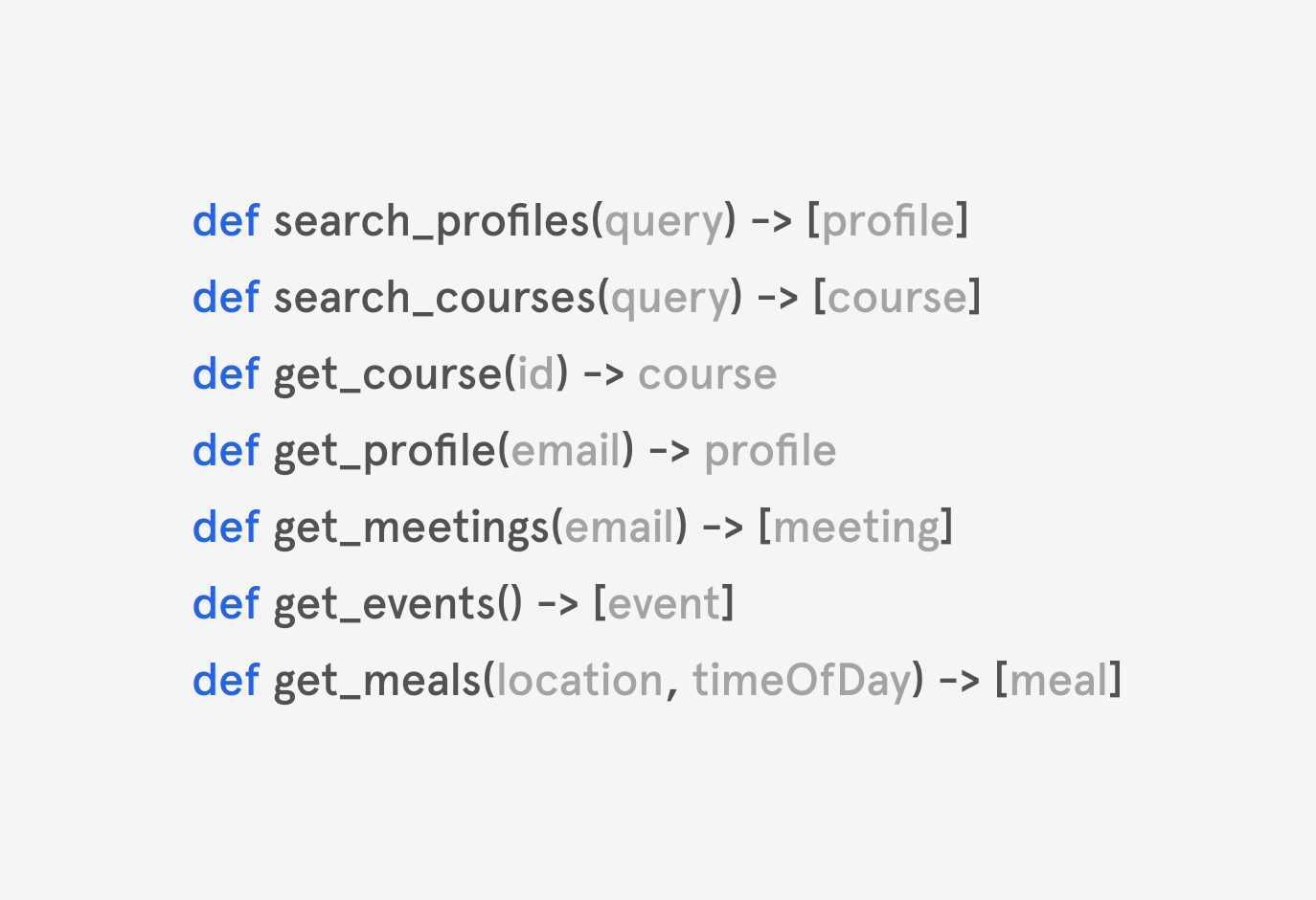

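When you provide the model with descriptions of the functions in your codebase, it can figure out which function to call, and with what arguments, based on the conversation. The model doesn't execute anything itself: it replies with the function's name and its arguments as JSON, and your code runs the function and passes the result back.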
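To make "a description of functions" concrete, here is a minimal sketch that derives one from a Python function's signature and docstring. It assumes the shape OpenAI's Chat Completions API accepted in its `functions` parameter in 2023: a `name`, a natural-language `description`, and a JSON Schema under `parameters`. `search_courses` and `describe` are hypothetical names, and only flat, primitive-typed parameters are handled.

```python
import inspect
import json
from typing import Callable

# Map Python annotations to JSON Schema type names. Simplifying assumption:
# only flat parameters with these primitive types are supported.
JSON_TYPES = {str: "string", int: "integer", float: "number", bool: "boolean"}


def describe(fn: Callable) -> dict:
    """Build a function description: name, docstring, and a JSON Schema
    for the parameters (those without defaults are marked required)."""
    sig = inspect.signature(fn)
    properties = {}
    required = []
    for name, param in sig.parameters.items():
        properties[name] = {"type": JSON_TYPES[param.annotation]}
        if param.default is inspect.Parameter.empty:
            required.append(name)
    return {
        "name": fn.__name__,
        "description": inspect.getdoc(fn) or "",
        "parameters": {"type": "object", "properties": properties, "required": required},
    }


def search_courses(query: str, limit: int = 5) -> list:
    """Search the course catalog for courses matching a free-text query."""
    return []  # hypothetical: a real implementation would query a database


print(json.dumps(describe(search_courses), indent=2))
```

Generating descriptions from signatures keeps them in sync with the code; the docstring matters because it is the only hint the model gets about when a function is appropriate.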

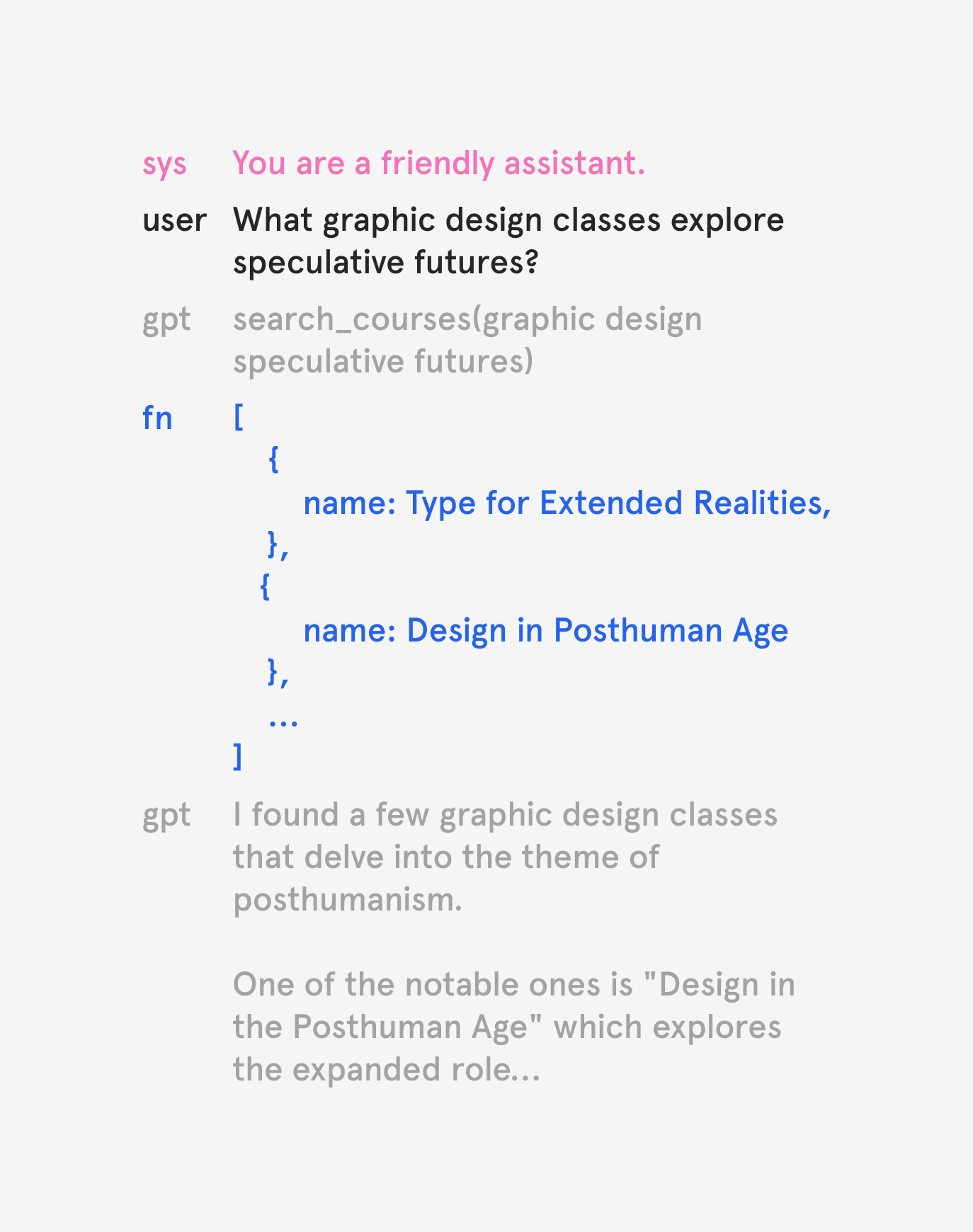

So when I ask about courses, it calls the search function with an appropriate query and uses the results to come up with an answer.
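On the application side, "calling" a function means looking up the name the model returned and running it with the decoded arguments. The sketch below assumes the 2023 function-calling message shapes: a `function_call` with a `name` and a JSON-encoded `arguments` string, answered by a message with the `function` role. The catalog, `REGISTRY`, and `dispatch` are hypothetical, and the model's reply is written by hand rather than fetched from the API.

```python
import json
from typing import Any, Callable


def search_courses(query: str, limit: int = 5) -> list[dict]:
    """Hypothetical stand-in for a real course search backend."""
    catalog = [
        {"code": "CS101", "title": "Intro to Programming"},
        {"code": "CS229", "title": "Machine Learning"},
        {"code": "HIST210", "title": "Modern European History"},
    ]
    q = query.lower()
    return [c for c in catalog if q in c["title"].lower()][:limit]


# Registry mapping the names the model sees to the Python callables that run.
REGISTRY: dict[str, Callable[..., Any]] = {"search_courses": search_courses}


def dispatch(function_call: dict) -> dict:
    """Run the function named in a model's `function_call` and wrap the result
    as a `function` role message to send back to the model."""
    fn = REGISTRY[function_call["name"]]
    args = json.loads(function_call["arguments"])  # arguments arrive as a JSON string
    result = fn(**args)
    return {"role": "function", "name": function_call["name"], "content": json.dumps(result)}


# A hand-written example of what the model might return for "any ML courses?"
reply = {"name": "search_courses", "arguments": '{"query": "machine learning"}'}
print(dispatch(reply))
```

The returned message is appended to the conversation, and the model reads the JSON result to phrase its answer.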

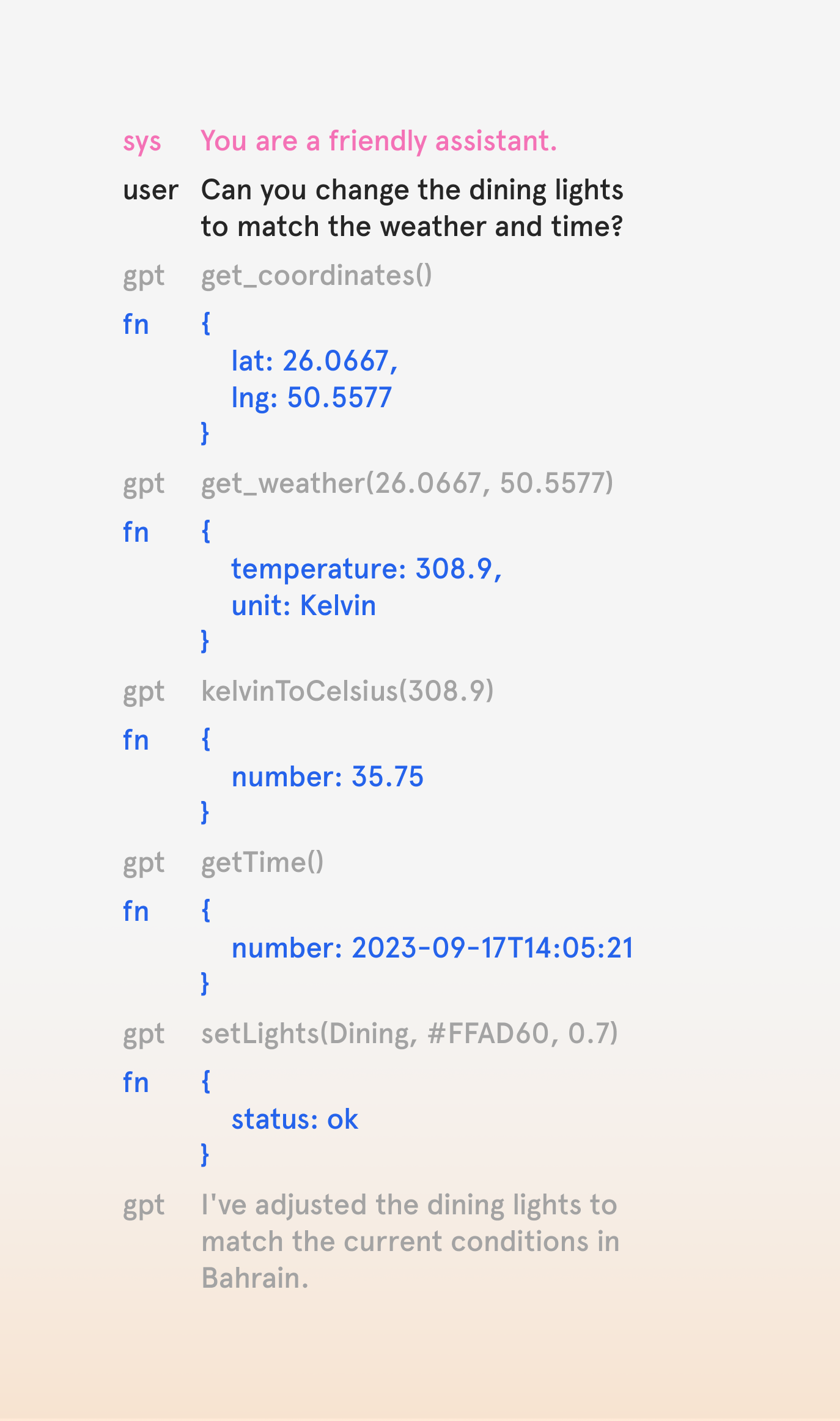

But a more interesting behavior emerges when the model calls multiple functions in sequence to answer a complex query.

It seems like the model can reason out the right sequence of functions to call in order to accomplish a task. This means that I can write a bunch of functions without the burden of implementing complex routing logic to run them.
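The only "routing" left in the application is a generic loop: send the conversation, run whatever function the model asks for, append the result, and repeat until the model answers in plain text. This is a minimal sketch under the same 2023 message-shape assumptions. The light-control functions are hypothetical and trimmed down, and a scripted stand-in replaces the real model so the example runs offline, which means the call order here is canned rather than reasoned out.

```python
import json
from typing import Any, Callable, Iterator


# Hypothetical application functions: each does one thing and knows nothing
# about the others.
def get_location() -> dict:
    return {"city": "Boston"}


def get_weather(city: str) -> dict:
    return {"city": city, "condition": "overcast"}


def set_lights(brightness: int) -> dict:
    return {"brightness": brightness, "ok": True}


REGISTRY: dict[str, Callable[..., Any]] = {
    "get_location": get_location,
    "get_weather": get_weather,
    "set_lights": set_lights,
}


def scripted_model() -> Callable[[list[dict]], dict]:
    """Stand-in for a chat model: replays canned assistant messages in order.
    A real model would choose each call by reading the conversation so far."""
    replies: Iterator[dict] = iter([
        {"role": "assistant", "content": None,
         "function_call": {"name": "get_location", "arguments": "{}"}},
        {"role": "assistant", "content": None,
         "function_call": {"name": "get_weather", "arguments": '{"city": "Boston"}'}},
        {"role": "assistant", "content": None,
         "function_call": {"name": "set_lights", "arguments": '{"brightness": 80}'}},
        {"role": "assistant", "content": "It's overcast in Boston, so I set the lights to 80%."},
    ])
    return lambda messages: next(replies)


def run(model: Callable[[list[dict]], dict], user_query: str,
        max_steps: int = 10) -> tuple[str, list[dict]]:
    """Call the model, execute any function it asks for, feed the result back,
    and repeat until it answers in plain text."""
    messages = [{"role": "user", "content": user_query}]
    for _ in range(max_steps):
        reply = model(messages)
        messages.append(reply)
        call = reply.get("function_call")
        if call is None:
            return reply["content"], messages
        result = REGISTRY[call["name"]](**json.loads(call["arguments"]))
        messages.append({"role": "function", "name": call["name"], "content": json.dumps(result)})
    raise RuntimeError("model did not finish within max_steps")


answer, transcript = run(scripted_model(), "Set my lights for the weather outside.")
print(answer)
print([m["name"] for m in transcript if m["role"] == "function"])
```

The `max_steps` cap is a design choice of this sketch: since the model, not the code, decides when to stop, a bound keeps a confused model from looping forever.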

Here's another example where I adjust my lights based on location, time, and weather.

This behavior made me realize that most of my time developing software is spent writing routing logic that applies the right function to an incoming user query.

With models like GPT-3.5+, I can focus on writing functions that hold the core application logic, while the model takes care of deciding which ones to run and in what order.

That's why I built Symphony, an open-source playground to explore this potentially new paradigm of software development.